Methodology

Main Motivation

In the last decade, the increased availability of affordable robotic manipulation systems, such as robot arms and grippers, has opened new perspectives for many applications. However, because these systems lack the intelligence needed to interpret their working and environmental conditions, they remain unreliable in real-life use.

A key challenge of intelligent robotics is to create robots that can directly and autonomously interact with and manipulate the world around them to achieve their goals. Learning is essential to such systems, because the real world contains too many variations for a robot to have an accurate model, in advance, of human requests and behaviour, of its surrounding environment and objects, or of the skills required to manipulate them. It is also crucial to be able to transfer knowledge and abilities from one application to another and from one robotic system to another.

Two further aspects are safety and acceptability, which must be guaranteed at all times. Robots must detect conditions in which they cannot solve a problem while respecting safety requirements, and they must be accepted by their human users.

Project Goal and Objectives

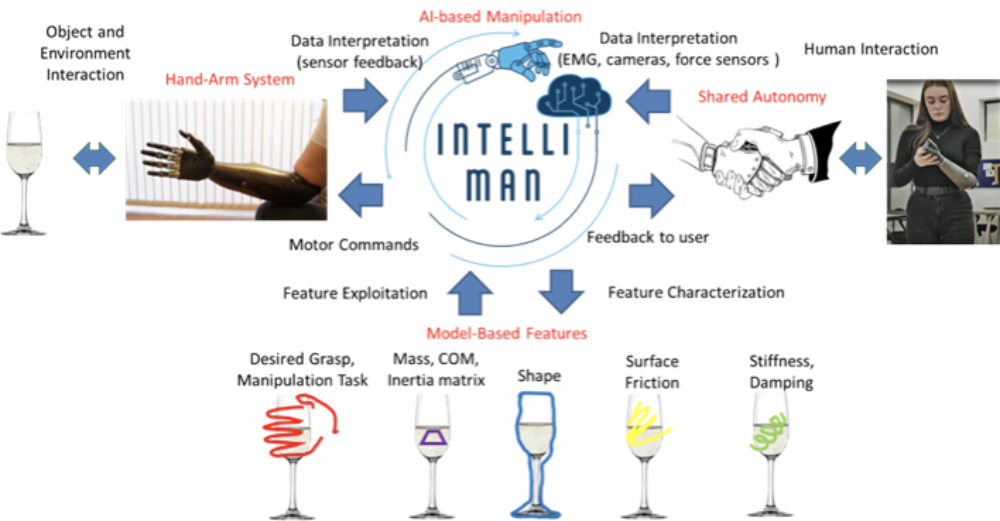

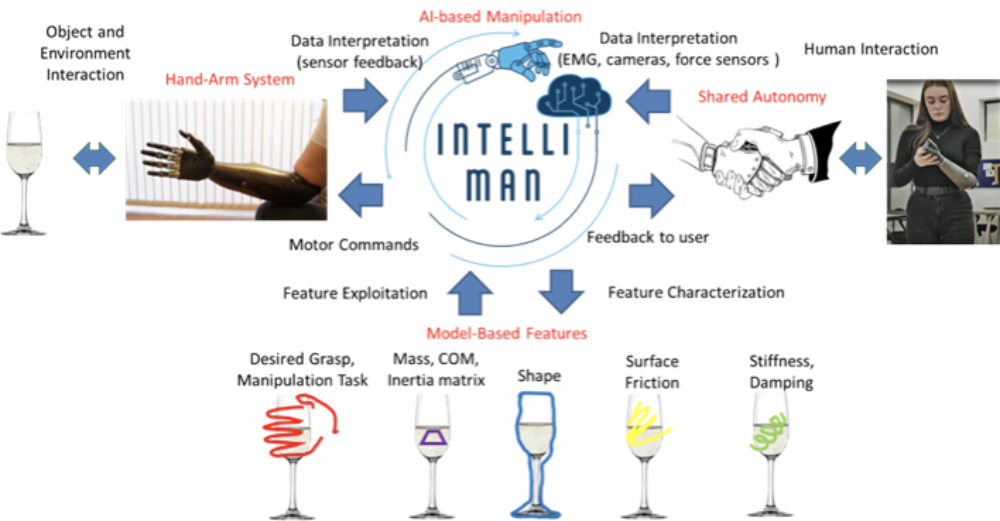

The IntelliMan project focuses on how a robot can efficiently learn to perform tasks in a targeted, high-performance and safe manner.

Main Goal

To enable next-generation robots to learn efficiently how to interact with and manipulate their surroundings in a purposeful, high-performance way, so that they can:

- Perform tasks with limited human supervision.

- Autonomously interact with objects regardless of their material, size and shape.

- Deal with environments that neither they nor their designers have foreseen or encountered before.

- Transfer knowledge between different systems and domains, independently of the platform.

As an additional goal, the project investigates how users perceive such AI-powered robotic manipulation systems and which factors enhance human acceptability.

Objectives

Development of next-generation robotic manipulation systems, empowered by artificial intelligence, that:

- Learn individual manipulation skills from human demonstration.

- Learn abstract descriptions of manipulation tasks suitable for high-level planning.

- Discover functionalities of objects by interaction.

- Guarantee performance and safety via automated, adaptive fault detection and user acceptability.

Use cases as demonstration objectives

The development of solutions to the interactive object manipulation problem in complex environments is supported by a comprehensive set of application scenarios, chosen to create an appropriate mix of challenges and requirements from which a general AI-based solution framework for manipulation can be derived. The AI-powered manipulation system will be able to identify and understand the relevant skills and structures independently of an object's shape, size, weight and surface characteristics, fully exploiting interaction with the environment and with the human, regardless of the robot's interface type and manipulation structure. For this reason, the use cases and robotic platforms considered in this project differ significantly. The main focus is on developing general approaches that can be transferred to and reused in different scenarios and platforms.

In addition, the following use cases will demonstrate the effectiveness of the developed technologies:

Upper-limb prosthetics (INAIL, FAU, UNIBO, UNIGE, IDIAP, ETHZ)

Real-life requirements of amputees towards intelligent (upper-limb) prostheses

- Performing general activities of daily living, such as manipulating objects, including small ones

- Having sensory feedback from the prosthesis

- Regulating force during grasp to avoid slippage of grasped objects

- Relieving the user of the constant visual attention and cognitive burden required while manipulating or grasping objects

- Functional coalescence of the limb prosthesis as an integral part of the human body.

Use case overall objective

Enhance (physically and functionally) the prosthesis with different sensors (high-density EMG, IMU) and AI-based algorithms to:

- Guarantee grasp stability across a variety of objects and conditions, and inform the user about incipient fault conditions, thus reducing the user's cognitive burden

- Reduce problems caused by misplacement of the EMG sensors, guaranteeing a suitable level of performance by introducing general AI-based feature recognition

- Reduce the training time of the AI by employing persistent learning capabilities

- Increase acceptability for amputees by leveraging adaptive shared autonomy to set the autonomy threshold at a suitable level within the manipulation task structure, in turn reducing prosthesis abandonment by improving embodiment and reducing phantom-limb-related complaints

- Demonstrate the capability of transferring manipulation task knowledge from other use cases.
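One common way to reason about grasp stability against slippage is the Coulomb friction cone: a contact holds while its tangential force stays below the friction coefficient times its normal force. The minimal sketch below illustrates that idea under stated assumptions; it is not necessarily the approach used on the prosthesis. It assumes a tactile sensor that reports normal and tangential force per contact and a known friction coefficient `mu`. The names `ContactReading`, `slip_risk` and `grip_force_command`, together with the safety margin and force limits, are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ContactReading:
    """Hypothetical tactile reading at one fingertip contact, in newtons."""
    normal: float      # force pressing into the object surface
    tangential: float  # force along the surface (e.g. the object's weight)


def slip_risk(reading: ContactReading, mu: float) -> float:
    """Ratio between the tangential force and the Coulomb friction limit.

    Values below 1 mean the contact is inside the friction cone; values at or
    above 1 indicate incipient or ongoing slip.
    """
    if reading.normal <= 0:
        return float("inf")  # no contact force: nothing prevents slipping
    return reading.tangential / (mu * reading.normal)


def grip_force_command(reading: ContactReading, mu: float, margin: float = 1.5,
                       min_force: float = 1.0, max_force: float = 40.0) -> float:
    """Normal force that keeps the contact inside the friction cone with a margin.

    Friction prevents slipping while tangential <= mu * normal, so the command
    targets normal = margin * tangential / mu, clamped to hypothetical actuator
    limits [min_force, max_force].
    """
    if mu <= 0:
        raise ValueError("friction coefficient must be positive")
    target = margin * reading.tangential / mu
    return min(max(target, min_force), max_force)
```

Usage: with `mu = 0.5`, a reading of 5 N normal and 3 N tangential gives a slip risk of 1.2, which is outside the cone. The sketch would then raise the grip force to 9 N. A slip-risk value approaching 1 could also serve as the kind of "incipient fault" signal that is fed back to the user.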

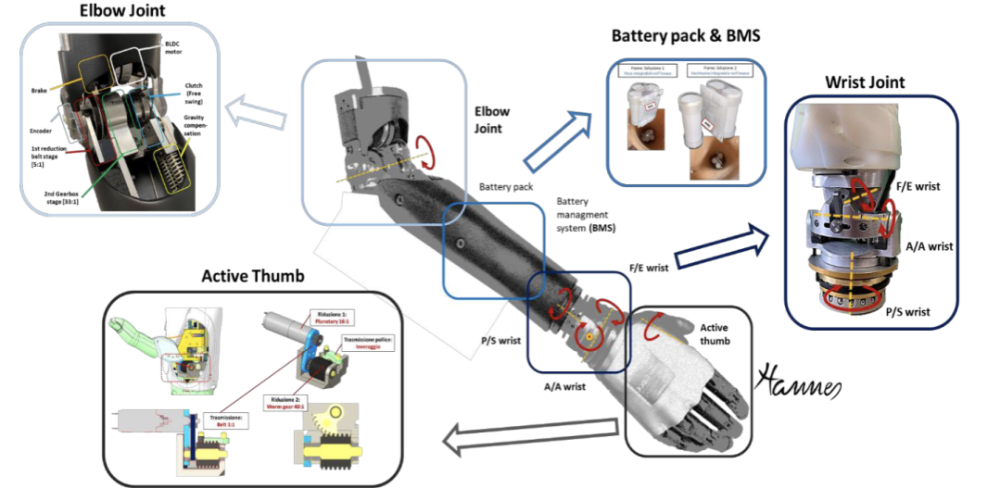

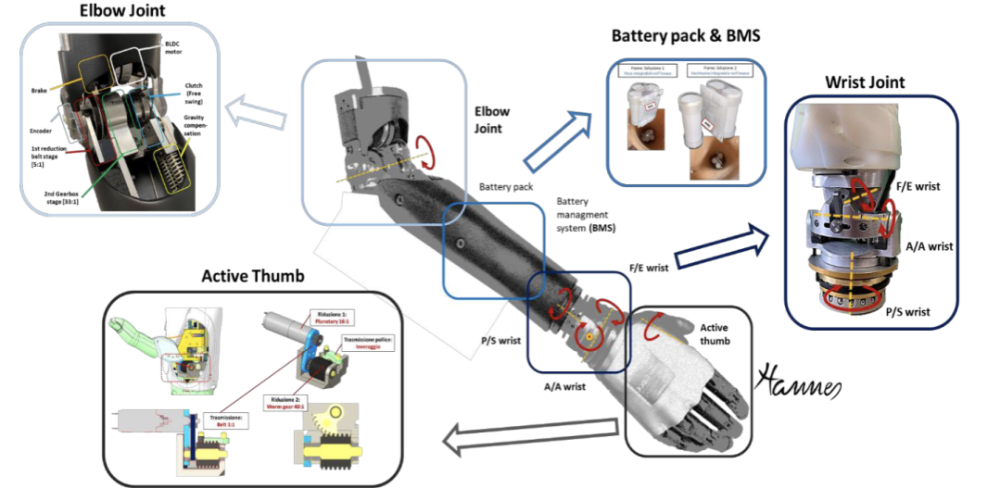

Development platform

Hannes Arm, a modular arm prosthesis adaptable to all levels of amputation.

Proposed solution approach

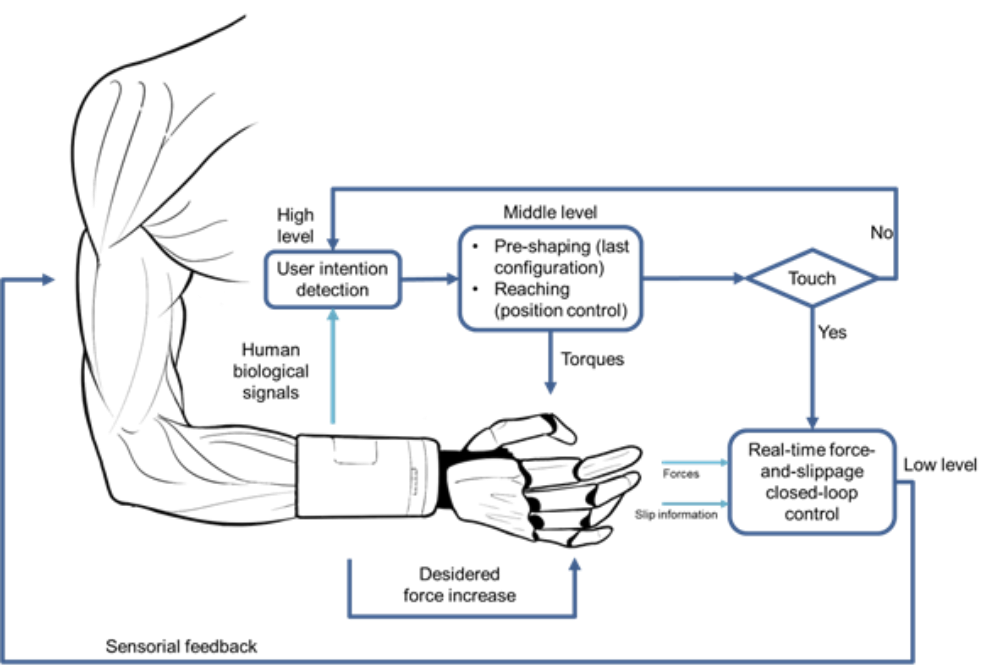

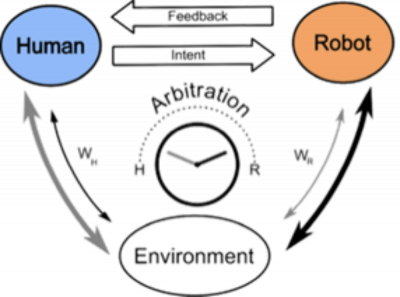

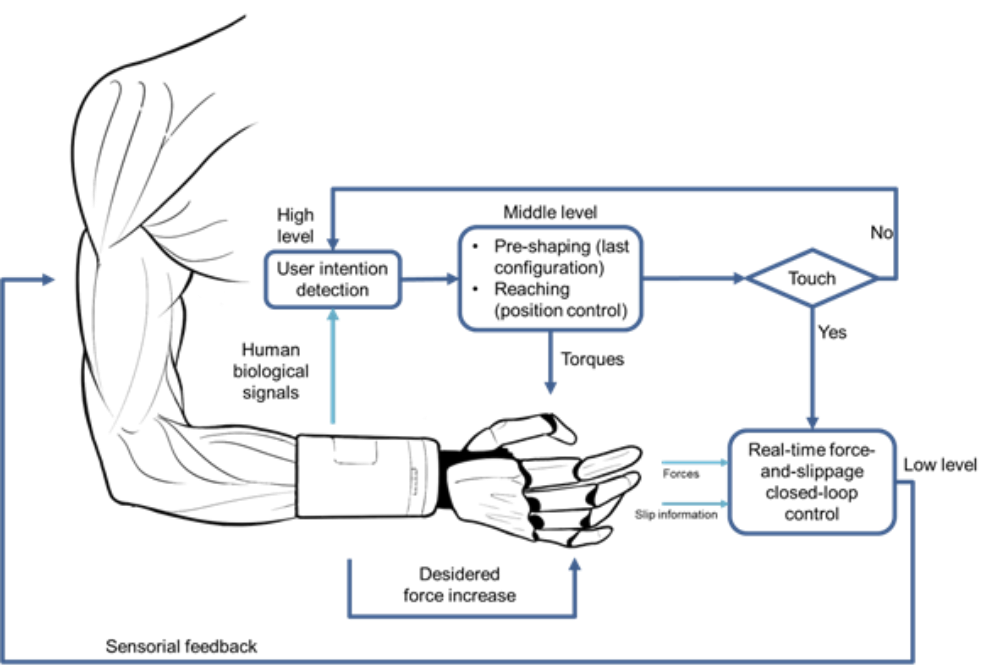

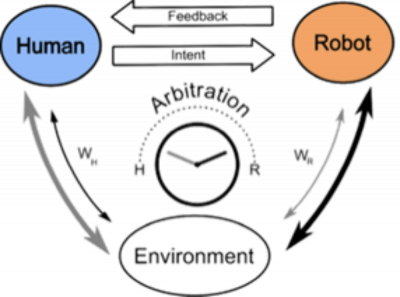

A prosthesis that interacts with the human and the environment in an intelligent, adaptive way through shared autonomy: a framework describing how human and prosthesis exchange information and interact with the environment based on an arbitration mechanism. Task control is assigned either to the human or to the prosthesis, depending on their capabilities and intentions. Feedback to the human is provided through haptics. Automatic failure detection and intent-detection algorithms are expected to improve the safety and reliability of movements and grasping.

Dynamic changes in role arbitration are meant either to increase the prosthesis's level of autonomy at the expense of the human's authority or, conversely, to increase the human's control over the shared cooperative activity at the expense of the prosthesis's autonomy. Under shared autonomy, human and prosthesis divide the subtasks of a complex activity (co-activity, used when the human is unable to actuate the prosthesis and must rely on it to carry out the communicated intent intelligently). The supervisory role of arbitration is to:

- Manage latency between amputee and prosthesis

- Increase effectiveness and efficiency of the task

- Improve embodiment and acceptability of the prosthesis.

IntelliMan prosthesis control strategy: the environment, the prosthesis and the sensors communicate with a central unit, which computes the commands sent to the prosthesis.
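The shifting of authority between human and prosthesis can be illustrated with linear policy blending, a technique that is widely used in shared-control work. The text above does not say whether IntelliMan uses it. In this sketch, the command sent to the prosthesis is α·u_prosthesis + (1 − α)·u_human. The weight α moves gradually towards the prosthesis's confidence in the decoded intent and drops back towards 0, which means full human authority, whenever the user overrides. The class `Arbiter`, its `rate` parameter and the `intent_confidence` input are hypothetical.

```python
from dataclasses import dataclass


def clamp(x: float, lo: float = 0.0, hi: float = 1.0) -> float:
    """Limit x to the interval [lo, hi]."""
    return max(lo, min(hi, x))


@dataclass
class Arbiter:
    """Hypothetical linear-blending arbiter between human and prosthesis commands.

    alpha = 0 gives the human full authority; alpha = 1 gives the prosthesis
    full autonomy.
    """
    alpha: float = 0.5
    rate: float = 0.2  # fraction of the gap to the target closed per update (assumed)

    def update(self, intent_confidence: float, user_override: bool) -> float:
        # Move authority towards the prosthesis when it is confident about the
        # user's intent, and back towards the human whenever the user overrides.
        target = 0.0 if user_override else clamp(intent_confidence)
        self.alpha = clamp(self.alpha + self.rate * (target - self.alpha))
        return self.alpha

    def blend(self, human_cmd: list[float], prosthesis_cmd: list[float]) -> list[float]:
        # Element-wise weighted sum of the two command vectors.
        if len(human_cmd) != len(prosthesis_cmd):
            raise ValueError("command vectors must have the same length")
        return [self.alpha * p + (1.0 - self.alpha) * h
                for h, p in zip(human_cmd, prosthesis_cmd)]
```

Usage: with `Arbiter(alpha=0.5, rate=0.5)`, a confident intent estimate of 1.0 moves α to 0.75. A subsequent user override moves it back to 0.375. The gradual update is a design choice meant to avoid abrupt changes of authority, which could otherwise feel to the user like latency or loss of control.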

Demonstrators to this use case are available here.

Daily-life kitchen activities (EUT, UPC, DLR, UNIBO, UNIGE, IDIAP)

Real-life requirements towards household-aid robots

- Perform a sequence of consecutive, interconnected tasks on sets of objects, such as retrieving and placing them

- Achieve a success rate of 80% for individual tasks (without failure-recovery strategies) and 60% for the entire scenario, with no more than 5% unforeseen object collisions or damage; fewer than 10% of failure cases may require human assistance because of insufficient failure-recovery strategies.
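The quantitative requirements above can be turned into an automatic check over logged trial runs. This minimal sketch rests on several assumptions that the text does not spell out. It treats the 80% figure as a per-task rate. It counts a scenario run as successful only if every task in that run succeeds. It normalises the collision/damage rate by the number of task attempts. It computes the human-assistance share over failed tasks only. `ScenarioRun` and `check_requirements` are hypothetical names.

```python
from dataclasses import dataclass

# Thresholds from the household-aid requirements.
MIN_TASK_SUCCESS = 0.80       # per-task success rate, without failure recovery
MIN_SCENARIO_SUCCESS = 0.60   # success rate of the entire scenario
MAX_COLLISION_RATE = 0.05     # unforeseen collisions or damage
MAX_ASSISTED_FAILURES = 0.10  # share of failures needing human assistance (strict bound)


@dataclass
class ScenarioRun:
    """Hypothetical log of one complete scenario run."""
    task_outcomes: list[bool]  # True if the individual task succeeded
    collisions: int            # unforeseen collision or damage events
    assisted_failures: int     # failed tasks that required human assistance


def check_requirements(runs: list[ScenarioRun]) -> dict[str, bool]:
    if not runs or not any(r.task_outcomes for r in runs):
        raise ValueError("need at least one run with at least one task")
    outcomes = [ok for r in runs for ok in r.task_outcomes]
    n_tasks = len(outcomes)
    n_failures = n_tasks - sum(outcomes)
    task_rate = sum(outcomes) / n_tasks
    # A scenario run counts as successful only if every task in it succeeded.
    scenario_rate = sum(all(r.task_outcomes) for r in runs) / len(runs)
    # Assumption: collisions/damage are normalised by the number of task attempts.
    collision_rate = sum(r.collisions for r in runs) / n_tasks
    assisted_rate = (sum(r.assisted_failures for r in runs) / n_failures
                     if n_failures else 0.0)
    return {
        "task_success": task_rate >= MIN_TASK_SUCCESS,
        "scenario_success": scenario_rate >= MIN_SCENARIO_SUCCESS,
        "collisions_or_damage": collision_rate <= MAX_COLLISION_RATE,
        "human_assistance": assisted_rate < MAX_ASSISTED_FAILURES,
    }
```

Usage: consider five runs of five tasks each, where one run fails two tasks and causes one collision. This yields a 92% task rate, an 80% scenario rate and a 4% collision rate, so all checks pass. If one of the two failures had needed human help, the assistance share would be 50% and that check would fail.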

Use case overall objective

Expand robotic manipulation capabilities in human-centred, daily-life (kitchen) environments to perform representative kitchen-aid tasks:

- Understanding the manipulation task structure for fragile objects and planning complex manipulation strategies with one or two coordinated arms, including in-hand manipulation

- Manipulating objects with movable parts and understanding the underlying kinematics

- Synthesizing different task-oriented grasps for objects with different shape, size and surface properties

- Picking objects in cluttered scenes, which may require physical interaction with the environment with non-deterministic results; reliability is ensured by detecting feasibility and stability conditions, focusing on the features relevant to accomplishing the task, and reusing previously learned methods

- Human-robot interaction for teaching manipulation actions and for robot-to-human handovers (i.e., understanding whether the human is available to receive the object, since he or she may be busy with other things; where he or she wants to receive it, e.g. in an offered hand; and by which part of the object, e.g. a knife must be offered with the handle free), as well as for human-to-robot handovers (e.g., handing over an object the robot cannot reach because it lies outside its workspace)

- Demonstrate the capability of transferring manipulation task knowledge from and to other use cases (prosthetic aspects from UC1, manipulation of deformable objects from UC3, and manipulation of soft fruits and vegetables from UC4) and to other tasks and applications.

Development platform

- Dual-Arm and Single-Arm TIAGo Mobile Manipulators

- Dual-Arm Universal Robots UR5 manipulator on a mobile platform

- Robot arms extended by parallel-finger grippers

- Tactile sensors for perception during grasping

- Kitchen mock-up equipped with an OptiTrack tracking system and 3D cameras.

Defined scenario

Prepare breakfast in a mock-up kitchen by:

- Setting the table, retrieving plates and glasses from the dishwasher

- Retrieving cutlery from drawers and placing it on the table

- Pouring cereal from the cupboard into a bowl

- Handling soft and deformable objects by selecting pieces of fruit and serving them on the plates.

Demonstrators to this use case are available here.

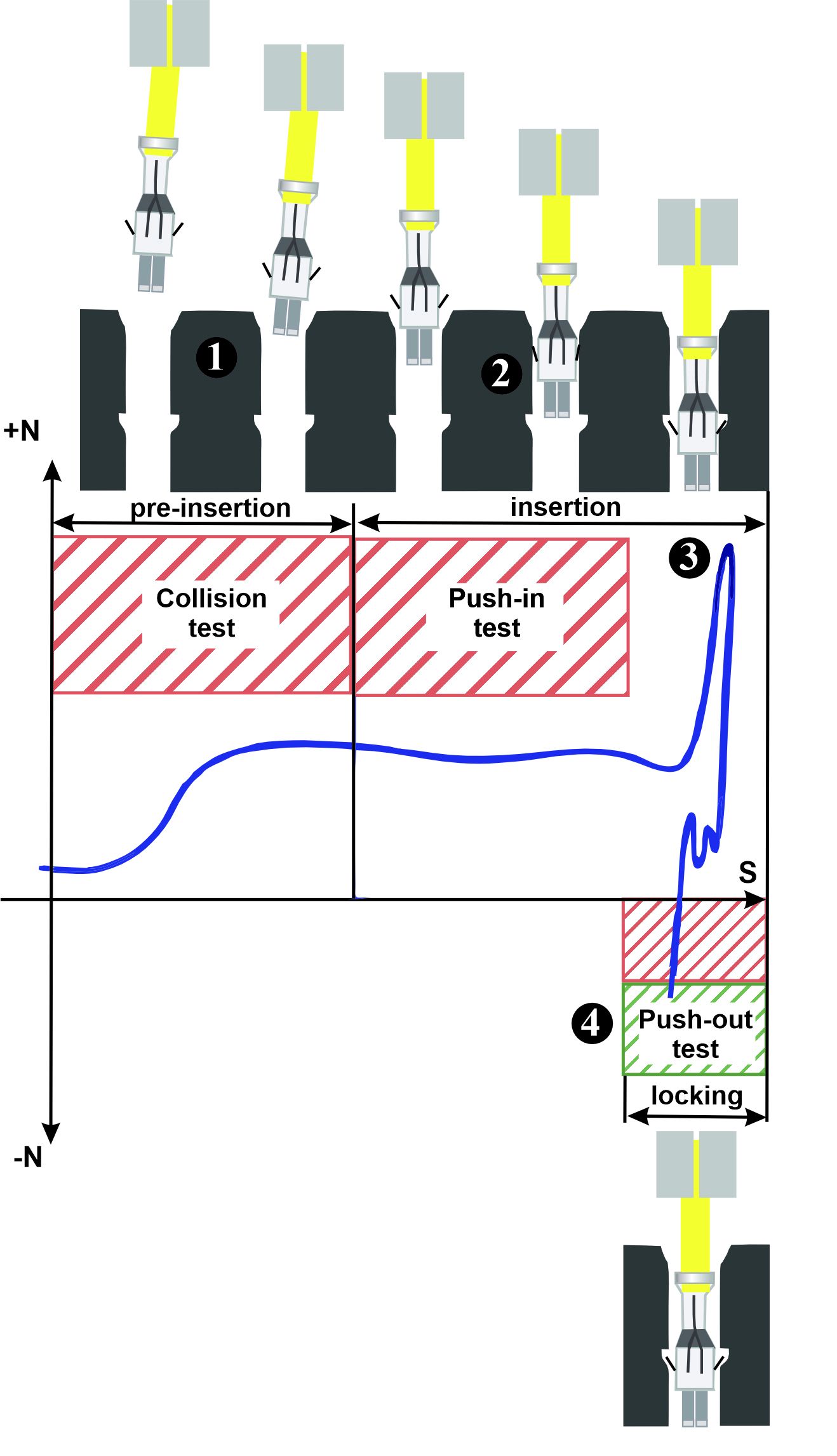

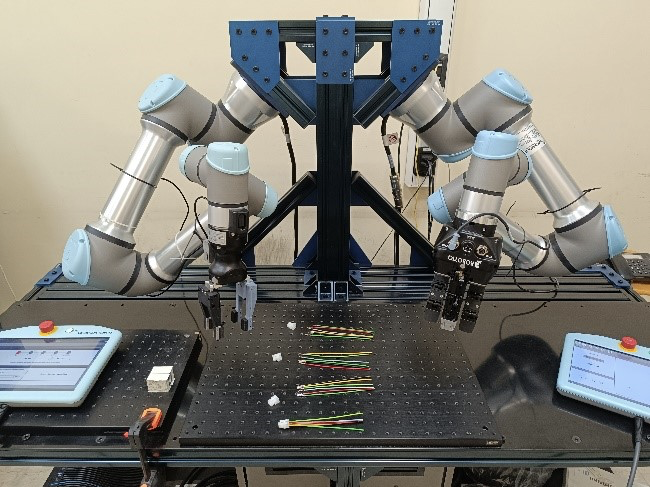

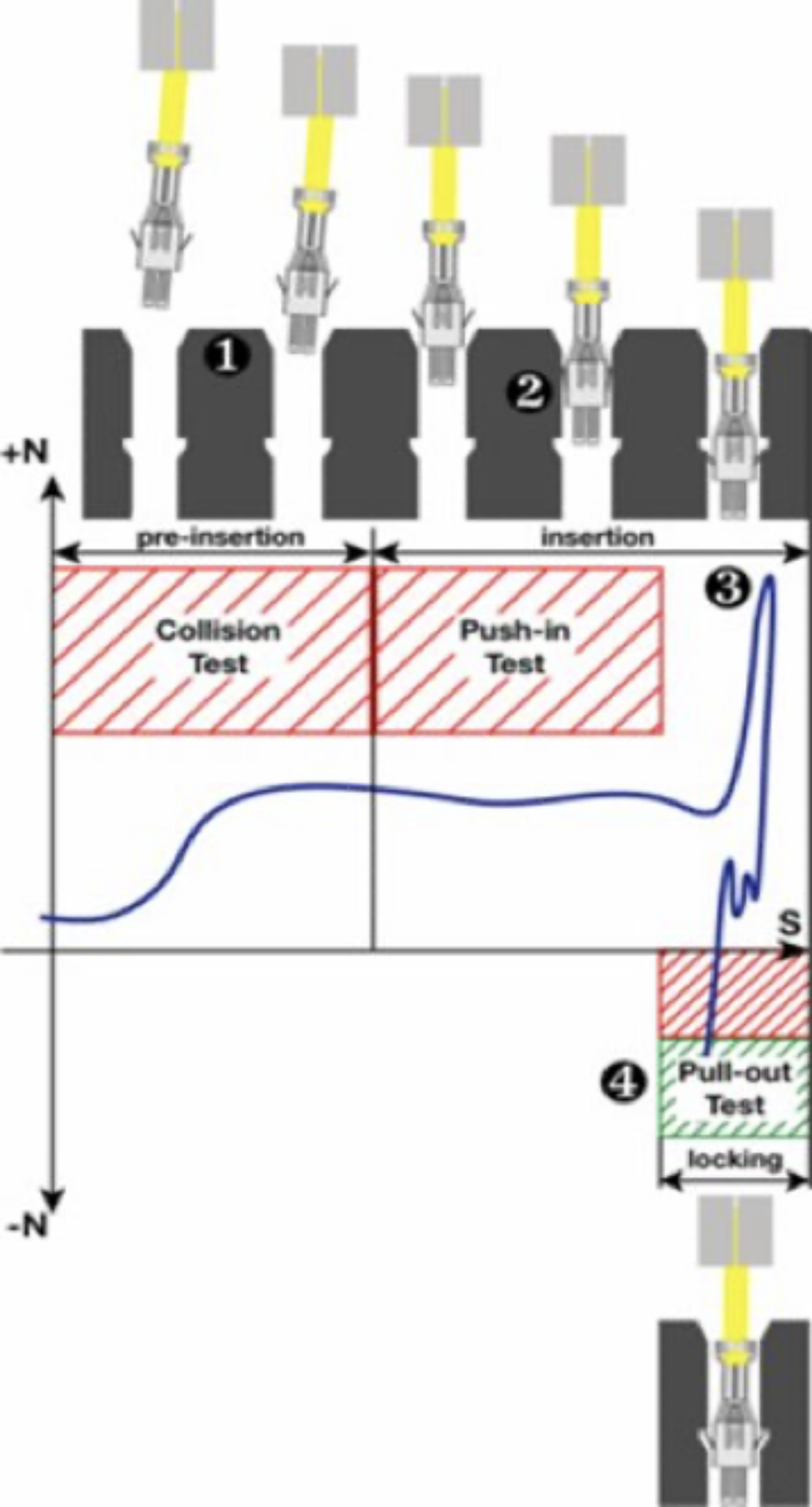

Robot-based assembly of products (flexible manufacturing) (ELVEZ, UNIBO, UCLV, IDIAP)

Real-life requirements towards robot-based product assembly

- Assembly of connectors through intelligent manipulation of small, deformable objects (mainly cables) interacting with rigid objects (the connector case)

- Precise interaction control for assembly correctness verification.

Use case overall objective

Enabling the robotic assembly of connectors, leveraging AI-powered manipulation with industrial arms and parallel grippers, by:

- Learning to manipulate and pick small deformable objects with a limited number of trials, such as cables with metallic terminals located in a collector

- Learning the kinematic relationship and the salient manipulation features between the terminal and the connector case, to insert the terminal with proper orientation and position

- Checking the correct or incorrect execution by evaluating the force profile by means of tactile sensors

- Completing the insertion of all cables into the connector case following a semantic sequence description

- Checking the result using both tactile and vision sensors

- Evaluating the capability of transferring insertion task knowledge to other robotic systems.
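One simple way to judge whether an insertion was executed correctly from its force profile is a tolerance-band (envelope) comparison. The measured profile is compared against a reference profile recorded during a known-good insertion. The minimal sketch below shows this generic technique, which may differ from the method actually used in the project. It assumes that both profiles are already sampled at the same insertion depths; real data would first need alignment, for example by resampling. `envelope_fraction`, `insertion_ok` and all tolerance values are hypothetical.

```python
def envelope_fraction(measured: list[float], reference: list[float],
                      abs_tol: float = 0.5, rel_tol: float = 0.2) -> float:
    """Fraction of samples whose measured force lies inside the tolerance band.

    Both profiles are assumed to be sampled at the same insertion depths, in
    newtons. The band half-width at each sample is abs_tol + rel_tol * |reference|.
    """
    if not reference or len(measured) != len(reference):
        raise ValueError("profiles must be non-empty and sampled at the same points")
    inside = sum(abs(m - r) <= abs_tol + rel_tol * abs(r)
                 for m, r in zip(measured, reference))
    return inside / len(reference)


def insertion_ok(measured: list[float], reference: list[float],
                 min_fraction: float = 0.9) -> bool:
    """Classify an insertion as correct if enough of its profile matches the reference."""
    return envelope_fraction(measured, reference) >= min_fraction
```

Usage: take a reference profile `[0.0, 1.0, 2.0, 4.0, 1.0]`. The measurement `[0.1, 1.1, 2.2, 3.8, 1.0]` lies entirely inside the band and is accepted. The measurement `[0.1, 1.1, 2.2, 0.5, 0.2]` misses the expected force peak, matches only 60% of the samples, and is rejected.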

Development platform

- Dual-arm Universal Robots UR5 collaborative robotic platform

- Robotic grippers: Robotiq Hand-E adaptive grippers and suitable sensorized fingers

- Sensors for cable grasping and for evaluating the correctness of the insertion phase: tactile sensors and ToF proximity or multi-range sensors

- Vision system based on multiple cameras to detect object locations during task execution: a 2D camera collecting multiple views for 3D shape reconstruction, a 3D camera for depth reconstruction, and an endoscopic camera to detect the axial orientation of the cable for insertion into the connector case.

Defined scenarios

- Connector in a known position, wires grasped from known positions, insertion and checking with a single arm

- Connector in a known position, wires grasped from a collector, estimation of wire pose, insertion and checking with a single arm

- Connector grasped from a known position, wires grasped from a collector, estimation of wire pose, insertion and checking with two arms

- Connector grasped from a collector, estimation of connector pose, wires grasped from a collector, estimation of wire pose, insertion and checking with two arms.

Demonstrators to this use case are available here.

Robotic fresh food handling for logistics applications (OCADO, DLR, UCLV, UNIBO, UPC, IDIAP)

Real-life requirements towards robot-based fresh food handling

- Picking loose, unpackaged fruits and vegetables (perishable, delicate and easily damaged items whose stiffness varies with ripeness, with irregular shapes and a range of sizes) from a pile in a storage container and packing them into a small container or punnet

- Handling a broad variety of fruits and vegetables, which poses an extensive spectrum of challenges for robotic grasping and manipulation.

Use case overall objective

Extend robotic pick-and-place capabilities to loose, fragile items such as delicate fruits and vegetables:

- Study the use case for various objects with increased complexity of shape, fragility and clutter

- Enable picking from a dense environment, such as a pile of fruit in the storage container

- Enable packing into a punnet or small container to achieve the required fill level or number of items

- Reduce damage during picking and discard already bruised items

- Learn and apply human picking and packing strategies.

Development platform

to be added soon

Proposed solution approach

to be added soon

Defined scenarios

to be added soon

Demonstrators to this use case are available here.

Outcomes and expected impact

The project addresses a wide variety of specialized deployment scenarios for manipulation robots in unstructured environments that neither the robots nor their designers have foreseen or encountered before. The potential of autonomous manipulation by robots capable of manipulating their environment is vast: hospitals, elder and child care, factories, outer space, restaurants, service industries and home environments.

The expected outcomes can be summarized in:

- Broader adoption of AI-oriented methods in robotic manipulation and human-machine interaction for increased portability, affordability, reliability and safety.

- Penetration in industry through safe, fast and easily adaptable AI-powered manipulation systems.

- Increased acceptance of robots by the general population.

- Improved acceptability and reliability of AI-enhanced prostheses and service robots.

- Raising awareness of the benefits of AI-oriented manipulation methods.