Demonstrator

IntelliMan’s heterogeneous set of use cases provides the mix of challenges and requirements needed to produce a general AI-based solution framework for the manipulation problem. The manipulation system must be able to identify the relevant skills and structures independently of object shape, size, weight, surface characteristics, etc., fully exploiting interaction with the environment and the human, regardless of the interface type and manipulator structure.

Use Case 1

Upper limbs prosthetics

The demonstrator focuses on three key areas:

Prosthesis VR setup by FAU

Development of immersive virtual environments for a realistic prosthesis-environment interaction based on surface electromyographic signals, used for testing shared autonomy control algorithms as well as aspects of human-machine interaction.

The video shows the interactive control of the MuJoCo Shadow Hand in a virtual PastaBox environment

Incremental Learning for position and velocity control by FAU

Position and velocity control based on incremental learning, for participants with and without limb differences.

The video shows an incremental learning strategy being used inside a Target Achievement Control Test (TACT)

The Grip Strength Regulation Setup by Uni Bologna and INAIL

Experimenting with shared autonomy algorithms using tactile information and electromyographic signals to control grip force in a research robotic prosthetic hand, with participation from able-bodied subjects. This allows fine modulation of the grasp strength by balancing control between the human operator and the robot hand.
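The idea of balancing control between the human operator and the robot hand can be illustrated with a simple linear arbitration rule. This is only a minimal sketch: the function name `blend_grip_force`, the `autonomy` weight, and the example force values are assumptions for illustration and do not describe the algorithm actually used in the setup.

```python
def blend_grip_force(human_force, robot_force, autonomy):
    """Linear arbitration between two grip-force commands.

    autonomy = 0.0 gives full human control, autonomy = 1.0 full robot control.
    All names and units here are hypothetical.
    """
    if not 0.0 <= autonomy <= 1.0:
        raise ValueError("autonomy must lie in [0, 1]")
    return (1.0 - autonomy) * human_force + autonomy * robot_force


# Hypothetical example: the EMG-derived command asks for 8.0 force units,
# the tactile-based controller suggests 4.0, and the robot gets 25% authority.
command = blend_grip_force(human_force=8.0, robot_force=4.0, autonomy=0.25)
```

A fixed weight is the simplest choice; a shared autonomy scheme would typically vary it over time, for example as a function of the estimated grasp state.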

The video shows participants using the robot hand to achieve specific grasp strengths, both on objects at fixed locations and in tasks resembling activities of daily living, specifically the preparation of a recipe mix. The tasks involved various grasp actions at different strength levels, and participants were evaluated on their ability to reach target strengths within defined error zones. The final part of the video shows preliminary qualitative testing with the testbed and hardware planned for the next development and demonstrator: the SHAP test and the Hannes prosthetic hand.
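Evaluating target strengths within error zones can be sketched as a tolerance check around each target. The tolerance value, the normalized strength scale, the trial data, and the function names below are all assumptions made for illustration; the actual evaluation protocol is not specified here.

```python
def within_error_zone(measured, target, tolerance):
    """Return True if a measured grip strength lies within target +/- tolerance."""
    return abs(measured - target) <= tolerance


def success_rate(trials, tolerance):
    """Fraction of (measured, target) trials that end inside the error zone."""
    if not trials:
        raise ValueError("at least one trial is required")
    hits = sum(within_error_zone(m, t, tolerance) for m, t in trials)
    return hits / len(trials)


# Hypothetical trials: (measured strength, target strength), normalized to [0, 1].
trials = [(0.32, 0.30), (0.57, 0.60), (0.81, 0.60), (0.28, 0.30)]
rate = success_rate(trials, tolerance=0.05)
```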

Real-Time Mechanical Stress Reconstruction Using a Piezoelectric Tactile Sensor Integrated into the Hannes Hand by Uni Genova

The video shows the integration of two finger caps encapsulating a distributed sensing system based on piezoelectric materials on the Hannes prosthetic hand. The video also includes a graphical user interface that streams the output of a signal processing algorithm in real time, providing continuous visualization of the reconstructed stress map, and localization of the contact area.

Final UC1 demo at INAIL facilities with patient with amputation, by Uni Bologna, FAU, ETH Zürich, INAIL

This use case demonstrates a shared autonomy control framework for robotic hand grasping, combining user intent estimation, tactile feedback, and adaptive assistance. The user’s velocity intent is decoded from EMG using an incremental learning-based algorithm, and is modulated through a variable scaling mechanism to regulate the opening and closing motion of the robotic hand. Tactile feedback from the robotic fingers is processed through an HMM-based grasping description, which decomposes the grasping process into probabilistically consistent phases. The estimated grasp state and grip strength are used both to adapt the level of shared autonomy and to provide sensory feedback to the user via vibrotactile stimulation.
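The two mechanisms described above, HMM-based estimation of the grasp phase from tactile feedback and variable scaling of the decoded velocity, can be sketched together as follows. Everything specific in this sketch is an assumption: the three phase labels, the discrete tactile symbols, the transition and emission probabilities, and the rule that ties the scaling factor to the probability of the "hold" phase. It shows a generic HMM forward filter, not the model used in the demo.

```python
PHASES = ["approach", "contact", "hold"]  # hypothetical phase labels

# Hypothetical transition matrix: TRANSITIONS[i][j] = P(next phase j | current phase i).
TRANSITIONS = [
    [0.8, 0.2, 0.0],
    [0.0, 0.7, 0.3],
    [0.0, 0.0, 1.0],
]

# Hypothetical observation model: P(tactile symbol | phase).
EMISSIONS = [
    {"none": 0.90, "light": 0.09, "firm": 0.01},
    {"none": 0.10, "light": 0.80, "firm": 0.10},
    {"none": 0.01, "light": 0.19, "firm": 0.80},
]


def forward_step(belief, observation):
    """One forward-filter update: predict with the transitions, then weight by the observation."""
    n = len(belief)
    predicted = [sum(belief[i] * TRANSITIONS[i][j] for i in range(n)) for j in range(n)]
    weighted = [predicted[j] * EMISSIONS[j][observation] for j in range(n)]
    total = sum(weighted)
    return [w / total for w in weighted]


def scaled_velocity(emg_velocity, belief, min_scale=0.2):
    """Scale the decoded velocity down as the belief in the 'hold' phase grows (assumed rule)."""
    hold_probability = belief[PHASES.index("hold")]
    scale = 1.0 - (1.0 - min_scale) * hold_probability
    return emg_velocity * scale


belief = [1.0, 0.0, 0.0]  # start fully in the "approach" phase
for symbol in ["none", "light", "light", "firm", "firm"]:
    belief = forward_step(belief, symbol)

most_likely = PHASES[max(range(len(PHASES)), key=lambda k: belief[k])]
command = scaled_velocity(1.0, belief)  # decoded closing velocity of 1.0 (arbitrary units)
```

With this made-up observation sequence the belief concentrates on the final phase, so the closing velocity requested by the user is strongly attenuated once a firm grasp is detected.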

Use Case 2

Daily life kitchen activities

Reliable robotic manipulation of everyday kitchen objects, as a trade-off between prosthetic aspects and industry-oriented scenarios. It requires the various grasps and complex handling strategies needed to set and clear a table.

Evaluation of a plan for high-level pick and place: objects are moved to a given location based on a generated plan that involves moving the robot between two locations, obtaining the precise position and orientation of each object, and picking and placing the objects while respecting their constraints, as shown in the videos below.

Vision component inference by Uni Campania

Pose estimation of five daily-life objects of different scales.

Vision component inference by Uni Genova

The video shows grasp control by means of a distributed piezoelectric sensor mounted on the gripper of the Tiago robot.

Complete demo integrating most of the modules: moving objects from one location to another by autonomously generating and executing the set of actions required to perform the task, as shown in the video below:

Demonstrator for Intermediate Validation of UC2 by Eurecat, Uni Cataluna and Uni Campania

The video shows the autonomous execution of a plan to move an object within a kitchen setting, starting from a high-level task definition and using different modules developed within IntelliMan: a high-level plan generation and execution framework (Eurecat), precise object detection for pose extraction based on DOPE (Uni Campania), and a generator of Behaviour Trees with Flavours for grasping (Uni Cataluna).

Demonstrator for Final Validation of UC2 by Eurecat, Uni Cataluna, Uni Campania, Uni Genova

This demo shows the autonomous execution of a plan to arrange a set of objects within a kitchen, using an architecture that combines key modules developed within IntelliMan: a high-level plan generation and execution framework (Eurecat and Uni Cataluna), precise object detection for pose extraction based on DOPE (Uni Campania), and a Dynamic Generator of Behaviour Trees through Flavors and Stable Grasps (Uni Cataluna). Additionally, the robot is equipped with flexible tactile sensors (Uni Genova) to control grasp force.
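For readers unfamiliar with behaviour trees, the following minimal sketch shows how a pick-and-place task can be encoded with sequence and fallback nodes. It is a generic, hand-written tree and does not reproduce the Flavours-based generator or any IntelliMan module; the node classes, action names, and stubbed outcomes are all hypothetical.

```python
SUCCESS, FAILURE = "SUCCESS", "FAILURE"


class Action:
    """Leaf node wrapping a callable that returns True on success."""

    def __init__(self, name, fn):
        self.name = name
        self.fn = fn

    def tick(self, log):
        log.append(self.name)
        return SUCCESS if self.fn() else FAILURE


class Sequence:
    """Ticks children in order and fails as soon as one child fails."""

    def __init__(self, children):
        self.children = children

    def tick(self, log):
        for child in self.children:
            if child.tick(log) == FAILURE:
                return FAILURE
        return SUCCESS


class Fallback:
    """Ticks children in order and succeeds as soon as one child succeeds."""

    def __init__(self, children):
        self.children = children

    def tick(self, log):
        for child in self.children:
            if child.tick(log) == SUCCESS:
                return SUCCESS
        return FAILURE


# Hypothetical stubs standing in for navigation, perception and grasping modules.
tree = Sequence([
    Action("move_to_source", lambda: True),
    Action("estimate_pose", lambda: True),
    Fallback([
        Action("top_grasp", lambda: False),   # pretend the first grasp fails
        Action("side_grasp", lambda: True),   # the alternative grasp succeeds
    ]),
    Action("move_to_target", lambda: True),
    Action("place_object", lambda: True),
])

log = []
result = tree.tick(log)
```

The fallback node is what lets the tree recover from a failed grasp without replanning the whole task: the alternative grasp is tried before the sequence continues to the placing steps.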

Use Case 3

Robot-based assembling of products

Robust handling of deformable linear objects, enabling the robotic assembly of connectors through AI-powered manipulation with industrial arms and parallel grippers. The use case consists of assembling connectors through intelligent manipulation of small, deformable objects (mainly cables) interacting with rigid objects (the connector case), with precise interaction control to verify that the assembly is correct.

Scenario 1 by Uni Campania, Uni Bologna and ELVEZ

The connector is fixed in a known position, the wires are grasped from known positions with known poses. The insertion phase and the checking of correctness are required, as shown in the video below.

Scenario 2a by Uni Campania, Uni Bologna and ELVEZ

The connector is fixed in a known position, while the wires are grasped from a collector with a known pose and the pin axial angle is unknown and must be estimated.

Scenario 2b by Uni Campania, Uni Bologna and ELVEZ

In a worst-case scenario, the connector is fixed in a known position, while both the position of the wire between the fingers and the axial angle are unknown.

Human Performing the Task by Uni Campania, Uni Bologna and ELVEZ

The video shows the data collection procedure, using the reference force/torque sensor, while the task is performed by a human.

Scenario 3 by Uni Campania and ELVEZ

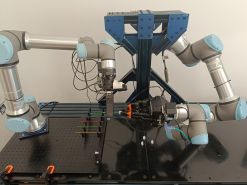

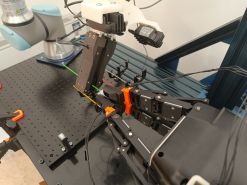

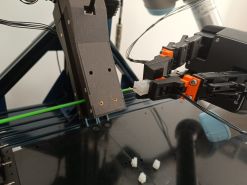

The connector is picked from a predefined location, while the wires are grasped from a specialized collector. A stereo vision system estimates the wire pose, and tactile feedback is used to monitor the insertion phase. The process is executed by a dual-arm platform, where each arm is dedicated to one component (connector and wires, respectively).


Scenario 4 by Uni Campania and ELVEZ

The assembly process is executed by a dual-arm robotic platform, with each manipulator dedicated to a specific component (connector and wires). A stereo vision system is utilized to perform pose estimation for both the connector and the wires, which are retrieved from a specialized collector. The insertion phase is actively monitored via tactile feedback.

Final UC3 demo at ELVEZ facilities by ELVEZ, Uni Bologna, Uni Campania

Use Case 4

Robotic fresh food handling for logistic applications

Fast-moving, sensing-based multi-finger grasping in which manipulation dexterity is of paramount importance. Fresh food handling focuses on picking loose, unpackaged fruits and vegetables from a large storage container and packing them into a small container or punnet. There is a broad variety of fruits and vegetables to be handled, and each item presents a broad spectrum of challenges for robotic grasping and manipulation. Fresh products are perishable, delicate and easily damaged. Moreover, different varieties of fruits and vegetables have different ranges of stiffness depending on their ripeness. The items have irregular shapes and come in a wide range of sizes. In addition, grasping multiple objects simultaneously is a unique challenge that requires intelligent hardware and software development.

Fresh food handling use case by OCADO and Uni Genova

Scenario 1: Fresh food handling task with multiple loose phantom objects (3D-printed limes and white polystyrene spheres of different dimensions), with a gradually increasing level of clutter complexity.

Fresh food Handling Use Case Vision Component by Uni Campania

As a component of Scenario 1, demonstration of the DOPE vision component for recognizing single objects (limes) in scenes of increasing complexity.

Fresh food Handling Use Case Hardware Evaluation by DLR

Scenario 2: Benchmarking of multi-object grasping with four different end-effectors and three grasping strategies: top-down grasping with caging; group-and-grasp, which collects multiple items into a pile and cages them for grasping; and environment-aided grasping, which uses the environment to constrain object movement and performs a caging grasp against it.